Automatic Norms

Some new ideas I want to explain start with a 2000 paper on Taboo Tradeoffs. (See also newer stuff.) So I’ll review that paper in this post, and then I’ll explain my new ideas in the next post.

In Experiment 2 of the 2000 paper, each of 228 subjects was asked to respond to one of 8 scenarios, created by three binary alternatives. All the scenarios involved:

Robert, the key decision maker, was described as the Director of Health Care Management at a major hospital who confronted a “resource allocation decision.”

Robert was either asked to make a tragic tradeoff, where two sacred values conflicted, or a taboo tradeoff, where a sacred value was in conflict with a non-sacred value. The tragic tradeoff:

Robert can either save the life of Johnny, a five year old boy who needs a liver transplant, or he can save the life of an equally sick six year old boy who needs a liver transplant. Both boys are desperately ill and have been on the waiting list for a transplant but because of the shortage of local organ donors, only one liver is available. Robert will only be able to save one child.

The taboo tradeoff:

Robert can save the life of Johnny, a five year old who needs a liver transplant, but the transplant procedure will cost the hospital $1,000,000 that could be spent in other ways, such as purchasing better equipment and enhancing salaries to recruit talented doctors to the hospital. Johnny is very ill and has been on the waiting list for a transplant but because of dire shortage of local organ donors, obtaining a liver will be expensive. Robert could save Johnny’s life, or he could use the $1,000,000 for other hospital needs.

Robert was said to either find this decision easy or difficult:

“Robert sees his decision as an easy one, and is able to decide quickly,” or “Robert finds this decision very difficult, and is only able to make it after much time, thought, and contemplation.”

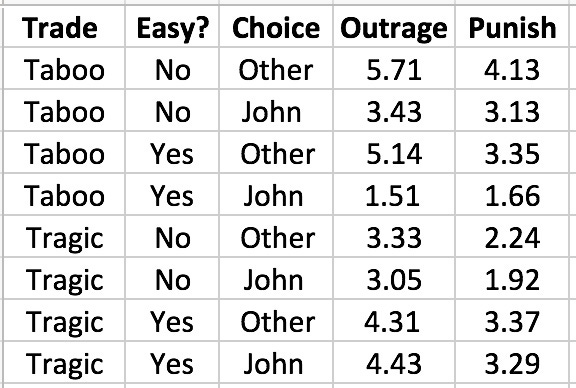

Finally, Robert was said to have chosen to save Johnny, or to have chosen otherwise. Subjects were asked to rate Robert’s decision and describe their feelings about it in 8 ways. They were also asked to make 3 decisions on actions regarding Robert, including dismiss from job, punish, and end friendship. Using factor analysis all these responses were combined into an outrage factor, mainly weighted on 6 of the ratings and feelings, and a punish factor, mainly weighted on the 3 actions. These factors were on a 1-7 point scale. Here are the average factor values for the eight possible scenarios:

In the case of a taboo tradeoff, Robert is less likely to be punished for saving Johnny than for not. We have a strong social norm against trading sacred things for non-sacred things, and Robert is to be punished if he violates this taboo. When Robert makes a tragic tradeoff, it is as if he must violate a norm no matter what he does. In this case, he is punished much more if he treats this as an easy choice; norm violation must be done in a serious, thoughtful manner.

However, when Robert makes a taboo tradeoff, he is punished much more if he treats this as a difficult choice. In fact, he is punished almost as much for saving Johnny after much thought as he is for not saving Johnny after little thought! It is worse to do the wrong thing after careful thought than after little thought.
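For readers who want to keep the design straight, here is a minimal sketch of how three binary alternatives yield the eight scenarios. The factor names and level labels are my own shorthand, not the paper's, and the sketch enumerates conditions only; it contains none of the reported ratings.

```python
from itertools import product

# Shorthand labels (assumed for illustration, not taken from the paper).
FACTORS = {
    "tradeoff": ("tragic", "taboo"),
    "difficulty": ("easy", "difficult"),
    "choice": ("saves Johnny", "chooses otherwise"),
}


def scenarios():
    """Return every combination of the three binary factors as a dict."""
    names = list(FACTORS)
    return [dict(zip(names, levels)) for levels in product(*FACTORS.values())]


if __name__ == "__main__":
    # Print one line per scenario: 2 x 2 x 2 = 8 lines in total.
    for number, scenario in enumerate(scenarios(), start=1):
        print(number, scenario["tradeoff"], scenario["difficulty"], scenario["choice"])
```

Each subject saw just one of these eight combinations, so the comparisons above are between groups of subjects rather than within any one person.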

Years ago, this result helped me to understand the political reaction when in 2003 my Policy Analysis Market (PAM) was accused of trying to let people bet on terrorist deaths.

PAM appeared to some to cross a moral boundary, which can be paraphrased roughly as “none of us should intend to benefit when some of them hurt some of us.” (While many of us do in fact benefit from terrorist attacks, we can plausibly argue that we did not intend to do so.) So, by the taboo tradeoff effect, it was morally unacceptable for anyone in Congress or the administration to take a few days to think about the accusation. The moral calculus required an immediate response.

Of course, no one at high decision-making levels knew much about a $1 million research project within a $1 trillion government budget. If PAM had been a $1 billion project, representatives from districts where that money was spent might have considered defending the project. But there was no such incentive for a $1 million project (spent mostly in California and London); the safe political response was obvious: repudiate PAM, and everyone associated with it. (more)

Today, however, my interest is in what these results imply for our awareness of where our norm feelings come from, and how much they are shared by others. These results suggest that when we face a choice, the categorization of some of the options as norm violating is supposed to come to us fast, and with little thought or doubt. Unless we notice that all of the options violate similarly important norms, we are supposed to be sure of which options to reject, without needing to consult with other people, and without needing to try to frame the choice in multiple ways, to see if the relevant norms are subject to framing effects. We are to presume that framing effects are unimportant, and that everyone agrees on the relevant norms and how they are to be applied.

Apparently the legal principle of “ignorance of the law is no excuse” isn’t just a convenient way to avoid incentives not to know the law, and to avoid having to inquire about who knows what laws. Regarding norms more generally, including legal norms, we seem to think “ignorance of the norms isn’t plausible; you must have known.”

If this description is correct, it seems to me to have remarkable implications, which I’ll discuss in my next post. (Unless of course you figure them all out in the comments now.)

Thank you for the quick reply! If I follow the arguments from the paper right, the accuracy would only increase if the market doesn't limit participation and size of trades too much. The accuracy would increase as long as the additional volume of trading attracted by manipulation outweighs the manipulation itself. However, as the market volume grows, it becomes possible to benefit from insider trading. Such a market could then be used to finance the activities that result in unlikely outcomes by betting on them. Does this make sense?

Manipulation makes prices MORE accurate, even in small thin markets: http://www.overcomingbias.c...